As I’ve mentioned before, the folks at i>clicker lent me a set of the new i>clicker2 clickers. I had a chance to try them out this week when I filled in for an “Astro 101” instructor. I sure learned a lot in that 50 minutes!

Just to refresh your memory, the i>clicker2 (or “ic2” as it’s also called, which is great because the “>” in “i>clicker2” is messing up some of my HTML) unit has the usual A, B, C, D, E buttons for submitting answers to multiple-choice questions. These new clickers (and receiver and software) also allow for numeric answers and alphanumeric answers. That last feature is particularly interesting because it allows instructors to ask ranking or chronological questions. In the old days, like last week, you could display 5 objects, scenarios or events and ask the students to rank them. But you had to adapt the answers because you had only 5 choices. Something like this:

Rank these [somethings] I, II, III, IV and V from [one end] to [the other]:

A) I, II, V, III, IV

B) II, I, IV, III, V

C) IV, III, V, I, II

D) III, I, II, IV, V

E) V, II, I, III, IV

These are killer questions for the students. What are they supposed to do? Work out the ranking on the side and then check that their ranking is in your list? What if their ranking isn’t there? Or game the question and work through each of the choices you give and say “yes” or “no”? There is so much needed to get the answer right besides understanding the concept.

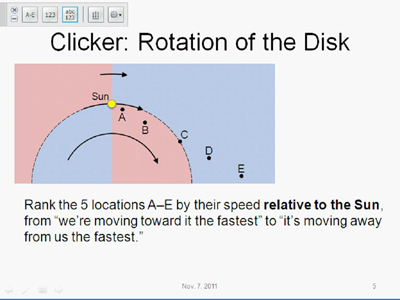

That’s what’s so great about the ic2 alphanumeric mode. I asked this question about how the objects in our Galaxy appear to be moving relative to us:

The alphanumeric mode of the ic2 allows instructors to easily ask ranking tasks like this one about the rotation of the Galaxy.

(Allow me a brief astronomy lesson. At this point in writing this post, I think it’ll be important later. Oh well, can’t hurt, right?)

The stars in our Galaxy orbit around the center. The Galaxy isn’t solid, though. Each star moves along its own path, at its own speed. At this point in the term [psst! we’re setting this up so the students will appreciate what the observed, flat rotation curve means: dark matter] there is a clear pattern: the farther the star is from the center of the Galaxy, the slower its orbital speed. That means stars closer to the center than us are moving faster and will “pass us on the inside lane.” When we observe them, they’re moving away from us. Similarly, we’re moving faster than objects farther from the center than we are, so we’re catching up to the ones ahead of us. Before we pass them, we observe them getting closer to us. That means the answer to my ranking question is EDCAB. Notice that location C is the same distance from the center of the Galaxy as us so it’s moving at the same speed as us. Therefore, we’re not moving towards or away from C: it’s the location where we cross from approaching (blueshifted) to receding (redshifted).

As usual, I displayed the question, gave the students time to think, and then opened the poll. Students submit a 5-character word like “ABCDE”. The ic2 receiver cycles through the top 3 answers so the instructor can see what the students are thinking without revealing the results to the students.

I saw that there was one popular answer with a couple of others, so I decided enough students got the question right that think-pair-share wouldn’t be necessary and displayed the results:

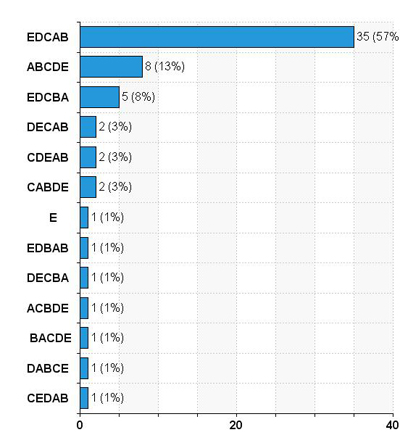

Students' answers for the galaxy rotation ranking task. The first bar, EDCAB, is correct. But what do the others tell you about the students' grasp of the concept?

In hindsight, I think I jumped the gun on that because, and here’s what I’ve been trying to get to in this post, I was unprepared to analyze the results of the poll. I did think far enough ahead to write down the correct answer, EDCAB, in big letters on my lesson plan. But what do the other answers tell us about the students’ grasp of the concept?

In a good multiple-choice question, you know why each correct choice is correct (yes, there can be more than one correct choice) and why each incorrect choice is incorrect. When a student selects an incorrect choice, you can diagnose which part of the concept they’ve missed. The agile instructor can get students to think-pair-share to reveal, and hopefully correct, their misunderstanding.

I’m sure that agility is possible with ranking tasks. But I hadn’t anticipated it. So I did the best I could on the fly and said something like,

Good, many of you recognized that the objects farther from the center are moving slower, so we’re moving toward them. And away from the stars closer to the center than us.

[It was at this moment I realized I had no idea what the other answers meant!]

Uh, I notice almost everyone put location C at the middle of the list – good. It’s at the same distance and same speed as us, so we’re not moving away from or towards C.

Oh, and ABCDE? You must have ranked them in the opposite order, not the way I clumsily suggested in the question. [Which, you might notice, is not true. Oops.]

[And the other 15% who entered something else? Sorry, folks…]

Uh, okay then, let’s move on…

What am I getting at here? First, these ranking tasks are awesome. Every answer is valid. None of that “I hope my answer is on the list…” And there’s no short-circuiting the answer by giving the students 5 choices, risking them gaming the answer by working backwards. I know there are lots of Astro 101 instructors already using ranking tasks, probably because of the great collection of tasks available at the University of Nebraska-Lincoln, but using them in class typically means distributing worksheets, possibly collecting them, perhaps asking one of those “old-fashioned” ranking task clicker questions. All that hassle is gone with ic2.

But it’s going to take re-training on the part of the instructor to be prepared for the results. In principle, there are 5! = 120 different ways to order the 5 letters, so 120 different rankings the students can enter. Now, of course, you don’t have to anticipate what each of the 119 incorrect answers means. But here are my recommendations:

- Work out the ranking order ahead of time and write it down, in big letters, where you can see it. It might be easy to remember, “the right answer to this question is choice B” but it’s not easy to remember, “the correct ranking is EDCAB.”

- Work out the ranking if the students rank in the opposite order. That could be because they misread the question or the question wasn’t clear. Or it could diagnose their misunderstanding. For example, if I’d asked them to rank the locations from “most-redshifted” to “most-blueshifted”, the opposite order could mean they’re mixing up red- and blue-shift.

- Think about the common mistakes students make on this question and work out the rankings. And write those down, along with the corresponding mistakes.

- Nothing like hindsight: set up the question so the answer isn’t just 1 swap away from ABCDE. If you had no idea what the answer was, wouldn’t you enter ABCDE?
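If you like to prepare this sort of thing on a computer, here is a minimal Python sketch of the recommendations above. It is only an illustration: the `ANSWER_KEY` diagnoses and the helper names `diagnose` and `summarize` are my own assumptions, not anything built into the ic2 software, and the responses would have to be typed in or exported by hand.

```python
from collections import Counter
from itertools import permutations

LOCATIONS = "ABCDE"
CORRECT = "EDCAB"  # the correct ranking from this post

# Every possible ordering of the five locations: 5! = 120 of them.
ALL_RANKINGS = ["".join(p) for p in permutations(LOCATIONS)]

# Answer key worked out before class. The diagnoses are illustrative
# guesses (assumptions), to be replaced with the mistakes you expect.
ANSWER_KEY = {
    CORRECT: "correct",
    CORRECT[::-1]: "ranked in the opposite order",
    LOCATIONS: "default ABCDE, possibly a guess",
}


def diagnose(response):
    """Return the prepared diagnosis for a single response."""
    response = response.strip().upper()
    if sorted(response) != sorted(LOCATIONS):
        return "not a valid ranking"
    return ANSWER_KEY.get(response, "unanticipated ranking")


def summarize(responses):
    """Count how many responses fall under each diagnosis."""
    return Counter(diagnose(r) for r in responses)
```

Notice that the opposite-order ranking here is `CORRECT[::-1]`, which is BACDE, not ABCDE. That is exactly the slip I made in class, and it is also why this answer sits just one swap away from ABCDE.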

I hope to try, and write about, some other types of questions with my collection of ic2 clickers. I’ve already tried a demo where students enter their predictions using the numeric mode. But that’s the subject for another post…

Do you use ranking tasks in your class, with ic2 or paper or something else, again? What advice can you offer that will help the instructor be more prepared and agile?

Peter, can the ic2 handle ranking tasks where some of the things are equal to others (e.g., A=B>C=D>E)?

Good question, ~~Josh~~ Joss. Using just the A, B, C, D, E buttons, there’s no way to enter = or >. You’d just have to recognize that AB… is equivalent to BA… The alphanumeric mode does have some characters besides 0-9, A-Z and can accept up to 15 characters, I think. So students could probably “type” in A=B>C=D>E as a 9-character answer. Entering letters beyond A-E takes a bit of practice and dexterity (though not any more than texting with a num pad on a dumbphone).

Instead of “=” and “>”, if spaces are quite easy to put in you could use the format:

“AB CD E” to represent “A=B>C=D>E”
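For anyone tallying such answers afterwards, here is a rough Python sketch of how the space-separated format could be compared so that “AB CD E” and “BA DC E” count as the same ranking. The function names are hypothetical, and it assumes the responses arrive as plain strings with spaces intact, which nobody in this thread has confirmed for the ic2.

```python
def parse_tiers(response):
    """Split a response like "AB CD E" into ordered tiers of tied items.

    Items inside one space-separated group are treated as equal, and
    groups are ranked left to right. Each tier is a frozenset, so the
    order of letters within a tier does not matter.
    """
    return [frozenset(group) for group in response.upper().split()]


def same_ranking(a, b):
    """True if two responses describe the same ranking, ties included."""
    return parse_tiers(a) == parse_tiers(b)


def to_symbols(response):
    """Spell out a tiered response using = and >."""
    return ">".join("=".join(sorted(tier)) for tier in parse_tiers(response))
```

With this, `to_symbols("BA CD E")` gives `"A=B>C=D>E"`, while `same_ranking("AB CD E", "A B CD E")` is false because the second response breaks the A=B tie.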

Great idea, Joss! I may have a chance to try ic2 again, this time in a physics class, and might try that out.

Oh, and sorry about calling you “Josh” – our former colleague Josh is coming to UBC for a visit and I was thinking about that :)

Being called Josh is part of my lot in life :)

Hi Peter, thanks for sharing this experience. You’ve done a great job unpacking the challenges and opportunities of ranking questions. I expect others will benefit greatly from this!

The distribution of answers caught my eye. There was certainly one popular answer (the correct one), but looking at the numbers, only 57% of students chose this answer. Since some of these students were probably guessing or at least unsure of their answer, I would bet that less than half of the class really understood the correct answer here. In cases like this, I used to do what you did–skip the peer instruction and go right into the classwide discussion of the question. But if only half the students understand the correct answer, isn’t some peer instruction warranted?

These days, I wouldn’t show students this distribution (since doing so would “give away” the correct answer and inhibit further discussion). Instead, I would tell them that they’re all over the map with this question and have them talk about the question in pairs. After they did, we’d do a second vote, the results of which I would show to the class. Odds are, that 57% would jump to 70 or 80%, maybe even higher.

The kind of distribution you received in this example is deceiving. It looks like there’s consensus around the right answer, but the proportion of students who actually get it is on the low side, which warrants some peer instruction. Something to watch out for, ranking question or not.

You’re absolutely right, Derek: I jumped in with an explanation much too early. In the moment of panic described in the post, I fell back to the default instructional method and took over the conversation to lecture them.

As you noted, only 57% got the answer I was looking for, and some of those correct answers could have been by accident or guessing. With barely 1 in 2 students getting it, it would have been the perfect opportunity for them to pair up and discuss the question together. I think between the 2 of them, most pairs would resolve the extraneous problems like getting the ranking in the right direction and figuring out how to use the ic2 clicker. And they could work on the astronomy, too! Yes, like you, I think we could have progressed to a much higher success rate after some peer interaction and a second vote.

I’ll certainly look more carefully at the bar graph of poll results next time. With the x-axis auto-scaling, it appears the most popular answer will always fill the graph. It’d be nice if the axis was fixed 0-100%. I’ll mention that to the iclicker folks.
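To show what I mean by a fixed axis, here is a small Python sketch that draws text bars where the full width always stands for 100% of the class, so a 57% answer fills only a bit more than half the bar. The function `fixed_scale_bars` is my own hypothetical helper, not part of the i>clicker software, and it assumes you already have the raw responses as a list of strings.

```python
from collections import Counter


def fixed_scale_bars(responses, top=3, width=50):
    """Return text bars for the most common answers on a fixed 0-100% scale.

    The full bar width always represents 100% of the responses, so the
    most popular answer only fills the bar if everyone chose it.
    """
    counts = Counter(responses)
    total = sum(counts.values())
    lines = []
    for answer, n in counts.most_common(top):
        share = 100 * n / total
        bar = "#" * round(width * n / total)
        lines.append(f"{answer:<6}|{bar:<{width}}| {share:.0f}%")
    return lines
```

Calling `print("\n".join(fixed_scale_bars(votes)))` on a list of votes prints one bar per answer, with the closing `|` marking where 100% would be.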

I think I’ll take a mulligan on this one :). I was overwhelmed by what our friend @RogerFreedman called “student data like H2O from a firehose.”

No worries, Peter. I’m with Roger, these free-response questions really do generate a firehose of information! And that requires new forms of agile teaching. It’s nice to see, however, that one response pattern I’ve observed in multiple-choice questions (the deceptively popular response) shows up in free-response questions, as well. Hopefully the discussion here on your blog will help all of us be more ready to respond to this kind of response pattern in the future.