Category Archives: Educational evaluation

Evaluating schools

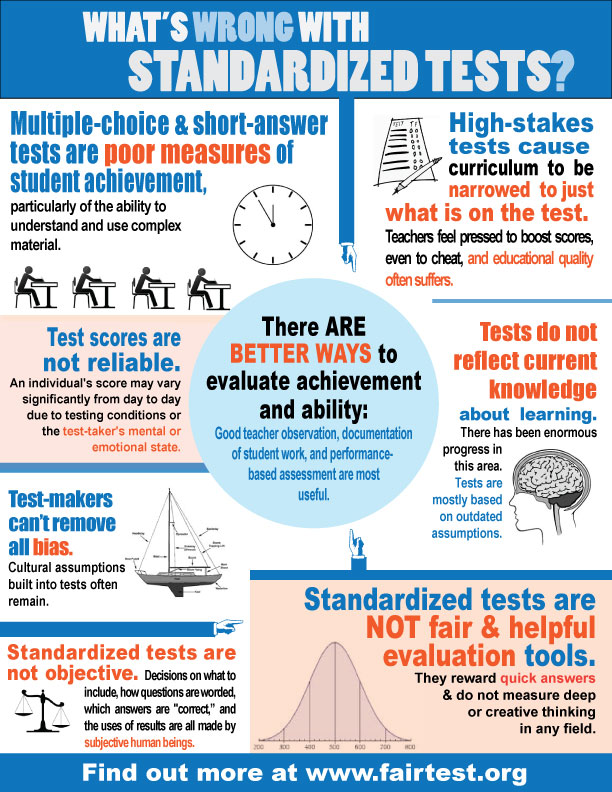

If nothing else, we have learned a great deal about what doesn’t work in terms of evaluating schools. The global penchant for using a few outcome measures just doesn’t do the trick… this is perhaps most obvious in the USA, where judging the quality of schools continues to spiral downward from NCLB to Race to the Top, but around the world we see a similar story. We also see a few counterpoints, such as the success of the Finnish school system, where the focus is decidedly not on standardized outcomes on a few measures.

In British Columbia, Canada, where education is decidedly a provincial matter and where provincial politics can actually lead to quite radical shifts in policies and programmatic initiatives, this is a moment of potential change. BC schools have for many years now been held hostage by the scores on the Foundation Skills Assessment (FSA), a test given to all 4th and 7th grade students in the province. Support for the FSA has been eroding over the past several years, with a chorus of skeptical teacher, school administrator and school trustee voices.

One initiative, The Great Schools Project, has been developing alternative ideas about school evaluation. The website gives a sense of the GSP platform, and a bit more information about the issues can be heard in a segment of a local radio talk show.

Students evaluate teachers

While the strategy of students evaluating professors is common in higher education, this approach is rare in K-12 education. One component of the Measures of Effective Teaching Project at Harvard is just such data. Based on a decade-old survey developed by Ronald Ferguson, an economist at Harvard, a shorter survey has been developed that asks students to describe their classroom instructional climate. Importantly, students (all the way from Kindergarten through high school) are not asked to judge their teachers, but to provide a description of what the classroom environment looks and feels like to them. The survey includes the following kinds of questions:

Caring about students (Encouragement and Support)

> Example: “The teacher in this class encourages me to do my best.”

Captivating students (Learning Seems Interesting and Relevant)

> Example: “This class keeps my attention – I don’t get bored.”

Conferring with students (Students Sense their Ideas are Respected)

> Example: “My teacher gives us time to explain our ideas.”

Controlling behavior (Culture of Cooperation and Peer Support)

> Example: “When I am confused, my teacher knows how to help me understand.”

Challenging students (Press for Effort, Perseverance and Rigor)

> Example: “My teacher wants us to use our thinking skills, not just memorize things.”

Consolidating knowledge (Ideas get Connected and Integrated)

> Example: “My teacher takes the time to summarize what we learn each day.”

A recent story in The Atlantic summarizes the results:

> The most refreshing aspect of Ferguson’s survey might be that the results don’t change dramatically depending on students’ race or income… But overall, even in very diverse classes, kids tend to agree about what they see happening day after day.

Whether these data should be used in teacher evaluation requires careful consideration, but from a larger evaluative perspective what this demonstrates is the very valuable data that those who are meant to benefit most from programs and interventions can provide, if you ask the right questions and if you respect their experiences and perspectives.

Purpose of evaluation

This is a pre-publication version of an entry in the International Encyclopedia of Education, 3rd Edition. Please note the correct citation in the text and refer to the final version in the print version of the IEE.

Mathison, S. (2010). The purpose of evaluation. In P. Peterson, B. McGaw & E. Baker (Eds.). The International Encyclopedia of Education, 3rd ed. Elsevier Publishers.

ABSTRACT

There are two primary purposes of evaluation in education: accountability and amelioration. Both purposes operate at multiple levels in education from individual learning to bounded, focused interventions to whole organizations, such as schools or colleges. Accountability is based primarily on summative evaluations, that is, evaluations of fully formed evaluands and are often used for making selection and resource allocation decisions. Amelioration is based primarily on formative evaluation, that is, evaluations of plans or developing evaluands and are used to facilitate planning and improvement. Socio-political forces influence the purpose of evaluation.

NEPC brief on parent trigger laws: MISSING THE TARGET? THE PARENT TRIGGER AS A STRATEGY FOR PARENTAL ENGAGEMENT AND SCHOOL REFORM

With the impending release of the movie Won’t Back Down, NEPC authors provide a critique of parent trigger laws.

There is so much that is wrongheaded about these laws, and the NEPC brief does a nice job of touching on the major points: the misconstrual of parent involvement as the primary key to school reform; the representation of the laws as a grassroots movement when they are funded and promoted by think tanks (DfER, Parent Revolution, and StudentsFirst) and foundations (Gates, Broad, Walton Foundations) that are often right wing; and the conflation of parent control of schools with the promotion of charter schools as the only alternative to public schooling.

Mathison keynotes BC Teacher Federation Annual General meeting

The full story is in the Teacher, the BCTF monthly news magazine.

teacher evaluation: a meta-evaluation of 10 approaches

The emphasis on assessing student learning through large-scale standardized and high-stakes testing programs has not been an effective strategy for school reform and improvement. No real surprise there. Reform has turned on teacher performance, often gauged by the very tests that are so fraught with socio-political and technical problems. Too often moving on to another strategy is an ideological move with precious little evidence one way or the other. While there are problems with this study that looks at how teacher evaluation is conceptualized and conducted in these 10 places, the effort to engage in evaluation of the evaluations is laudable.

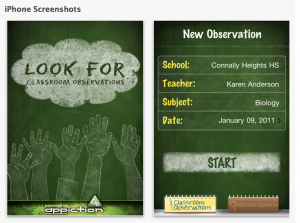

technology and evaluation

There can be no doubt that technology can make the work of evaluation easier, and the array of software and applications is ever-growing. Check out the AEA365 blog and search for technology related posts… there are plenty. The challenge for evaluators will be thoughtful use of technology and avoiding technology driven evaluation practices. One of the best examples of technology driving and structuring thinking, knowledge construction and presentation is PowerPoint… Microsoft has created simple software that too often controls what counts as information. Edward Tufte’s critique of ppt, PowerPoint Does Rocket Science–and Better Techniques for Technical Reports, is required reading for anyone who has ever used or will ever use PowerPoint.

Today Apple revealed the availability of Look For, an iPhone app for recording classroom observations of teaching that is marketed as a tool for teacher evaluation. With a quick click (and some added notes if you like) principals can record whether teachers are “making subject matter meaningful” or “facilitating the learning process.” The promo for Look For says the app has the following features:

- Create unlimited observations
- Sort observations by school, teacher, subject and date
- Select from hundreds of qualification points within 6 basic categories
- Easily email and share reports and progress instantly
- Track teacher progress through each of the 6 instructional categories
- Supports state and national standards

Everyone wants technology to make their lives and jobs easier, and principals are no exception. But is this like ppt? An app that pre-defines and standardizes what counts as good teaching and limits sensitivity to context may be time saving, but does it promote good evaluation? Establishing criteria is key to good evaluation, but this is and ought to be a slippery part of the process… we cannot and should not know all of the relevant criteria a priori, and we ought to be open to recognizing good-making and bad-making attributes of teaching in situ. Principals and teachers need to be able to recognize and acknowledge what is not easily or necessarily captured by the 6 instructional categories.

So maybe Look For is a good app, but only if used in a critical way… true for all technology.

capitalist Bill Gates may do what other capitalists could not

If you doubt that neo-liberalism dominates the educational reform landscape, take a look at this NYT story, which does a nice job of following the Gates Foundation money and how the spending has influenced the adoption of national curriculum standards, worked against teacher unions, and infiltrated school districts, think tanks and even the unions. This strategy is not new and is exactly what right-wing Christian groups have done to influence schools by getting elected or appointed to school board positions. In both cases, there is something unsavory about the subterfuge, the lack of transparency, the buying of influence… as opposed to public deliberations about the schools we want and how to get them.

getting to formative

Just as it makes little sense to talk about the validity of a test, it makes little sense to talk about a formative test. Although there is a good emphasis on formative assessment of student learning, there is an unfortunate confusion about what formative means. Too often the instrument is identified as formative, when in fact it is how the information from the instrument is used that makes the evaluation formative. The same test and the results of that test can be used either formatively or summatively. Just as the test is not valid (it is the inferences that are made that have or lack validity), neither is the test itself formative or summative. Popham has a nice little discussion of this in his Ed Week piece Formative Assessment–A Process, Not a Test.
