Spatial Invariance

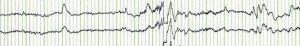

We essentially scan the waves with a motion detector and assign a score to each run of waves, indicating the likelihood the subject is lifting, grasping or holding.

CNNs systematize this idea of spatial invariance, exploiting it to learn useful representations with fewer parameters.

What does it mean by “Convolutional”? and why should we care?

While previously, we might have required billions of parameters to represent just a single layer in an data-processing network, we now typically need just a few hundred, without altering the dimensionality of either the inputs or the hidden representations. The price paid for this drastic reduction in parameters is that our features are now translation invariant and that our layer can only incorporate local information, when determining the value of each hidden activation. All learning depends on imposing inductive bias. When that bias agrees with reality, we get sample-efficient models that generalize well to unseen data. But of course, if those biases do not agree with reality, e.g., if data turned out not to be translation invariant, our models might struggle even to fit our training data.

Continue reading “FIRST E.A.D: Convolutional Neural Networks (CNN)”