07/17/23

05/20/23

05/20/23

03/4/23

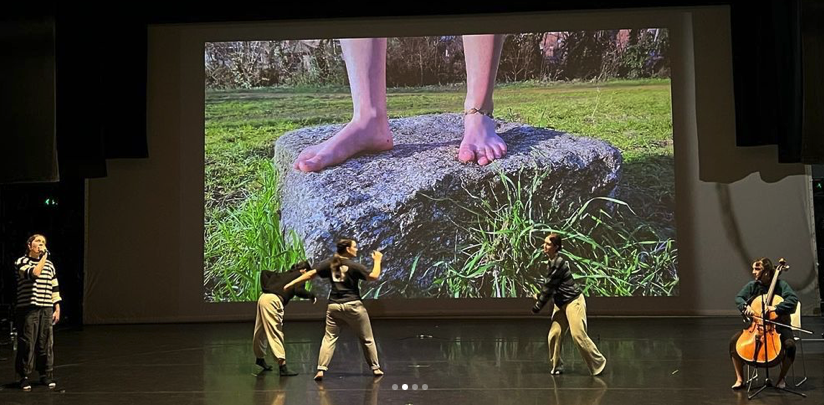

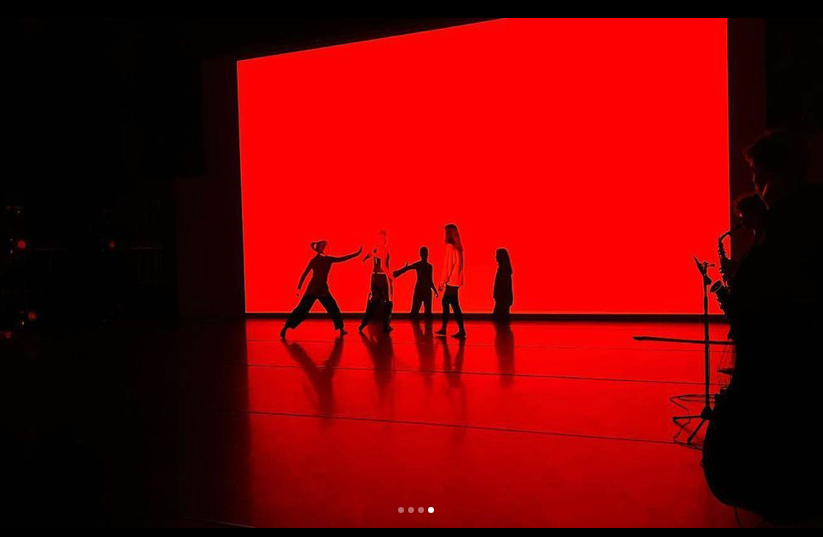

Trinity Laban Conservatoire (London): CoLab — Feb. 13 – 17, 2023

A week of working with music and dance students. Across the three pieces, we were able to use the TaSTE on-body lighting (Personal Environment for Audio Responsive Lighting, or PEARL), the KiCASS tracking, and two SHRUGs.


03/4/23

12/8/22

12/2/22

12/2/22

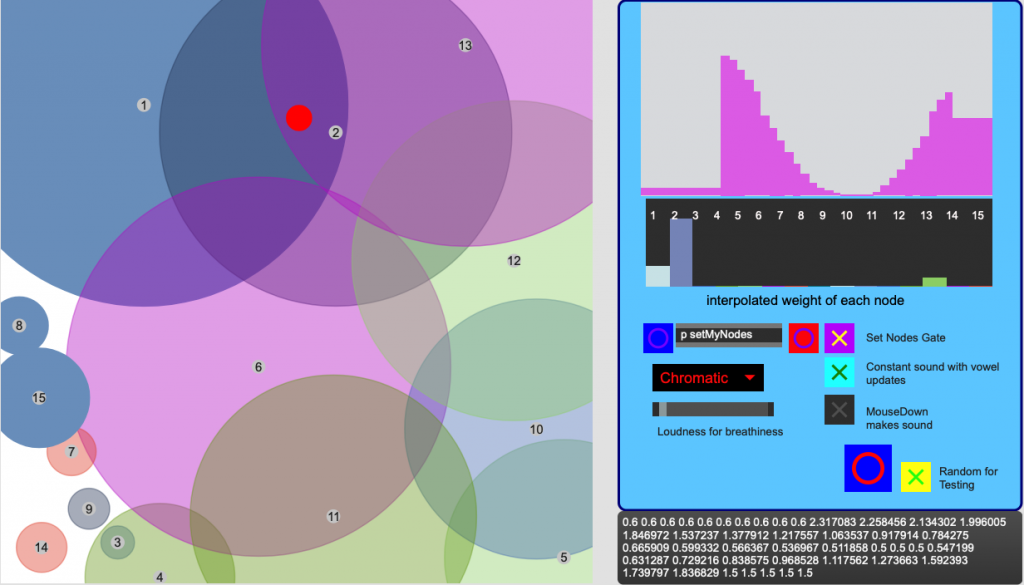

Welcome to Paradise scrub test

Danielle Lee testing the scrubbing response with right-hand tracking and left-hand syllable triggers.

11/24/22

11/19/22
