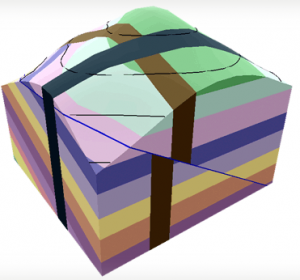

Visible Geology (app.visiblegeology.com) is an interactive tool for building, modifying and exploring 3D geological structures. Features include adding, removing and adjusting Geologic Beds, Geologic Folds, Faults, Domes & Basins, Dikes, Topography, Cross-Sections, Boreholes, and Strike Decals.

Here in EOAS, the tool has been used in several courses. Students in the general science course EOSC110, The Solid Earth: A Dynamic Planet, use it in a homework exercise that builds the skills needed for an awesome follow-up exercise, run in the classroom using worksheets and small groups. That follow-up exercise involves interpreting the large-scale geological map of the state of Wyoming in terms of the geological structures and tectonics of the region.

Getting first-year and non-science students to productively interpret ordinary maps is difficult, let alone geological maps! The Visible Geology homework exercise is the second in a three-part activity sequence. It involves self-directed completion of a worksheet, followed by an online quiz that tests the new geological map interpretation skills. The quiz includes 7 quantitative and qualitative feedback questions.

The success that students demonstrate at 3D thinking and geological map interpretation in the capstone activity is a testament to the benefits of practicing these expert-like skills using Visible Geology. It also reflects the pedagogic expertise of those who developed this three-part sequence: Brett Gilley and Lucy Porritt.

- The VG homework exercise can be seen here: vg-exercise-v5.

- The follow-up online questions (without answers) can be seen here: vg-questionset-prn.

Further details, including analyzed results and feedback from the first use of the VG exercise, can be obtained from F. Jones.