About a week ago, my colleague Cyn Heiner (@cynheiner) and I ran an all-morning-and-into-the-afternoon workshop on effective peer instruction using clickers. I wrote about preparing for the workshop so it’s only fitting that I write this post-mortem.

If “post-mortem” sounds ominous or negative, well, the workshop was okay but we need to make some significant changes. For all intents and purposes, the workshop we delivered is, indeed, dead.

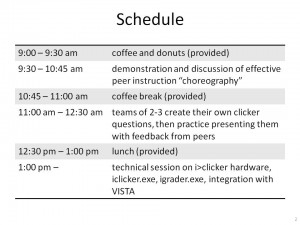

This was our (in hindsight, ambitious) schedule of events:

Schedule for our workshop, "Effective peer instruction using clickers."

The first part, demonstrating the “choreography” of running an effective peer instruction episode, went pretty well. The participants pretend to be students, I model the choreography for 3 questions while Cyn does colour commentary (“Did you notice? Did Peter read the question aloud? No? What did he do instead?”). The plan was, after the model instruction, we’d go back and run through the steps I took, justifying each one. It turned out, though, that the workshop participants were more than capable of wearing both the student hat and the instructor hat, asking good questions about what I was doing (not about the astronomy and physics in the questions). By the time we got to the end of the 3rd question, they’d asked all the right questions and we’d given all the justification.

We weren’t agile enough, I’m afraid, to then skip the next 15 minutes of PowerPoint slides where we ran through all the things I’d done and why.

Revised workshop: address justification for steps as they come up, then very briefly list the steps at the end, expanding only on the things no one asked about.

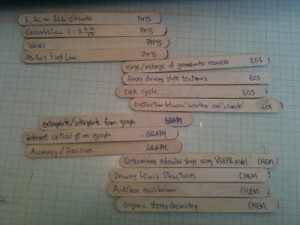

In the second part of the workshop, we divided the participants into groups of 2-3 by discipline — physics, chemistry, earth and ocean sciences — and gave them a topic about which they should make a question.

We wrote the topics on popsicle sticks and handed them out. This worked really well because there was no time wasted deciding on the concept the group should address.

We’d planned to get all those questions into my laptop by snapping webcam pix of the pages they’d written, and then have each group run an episode of peer instruction using their own question while we gave them feedback on their choreography. That’s where things went to hell in a handcart. Fast. First, the webcam resolution wasn’t good enough so we ended up scanning, importing smart phone pix, frantically adjusting contrast and brightness. Bleh. Then, the questions probed the concepts so well, the participants were not able to answer the questions. Almost every clicker vote distribution was flat.

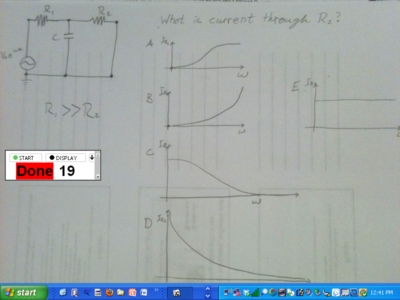

One group created this question about circuits. A good enough question, probably, but we couldn't answer it in the workshop.

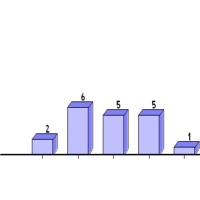

These are the votes for choices A-E in the circuits question. People just guessed. They were not prepared to pair-and-share, so the presenter did not have the opportunity to practice doing that with the "students."

The presenters had no opportunity to react to 1 overwhelming vote or a split between 2 votes or any other distribution where they can practice their agility. D’oh! Oh, and they never got feedback on the quality of their questions — were the questions actually that good? We didn’t have an opportunity to discuss them.

We were asking the participants to create questions, present questions, answer their colleagues’ questions AND assess their colleagues’ peer instruction choreography. And it didn’t work. Well, d’uh, what were we thinking? Ahh, 20/20 hindsight.

With lots of fantastic feedback from the workshop participants, and a couple of hours of caffeine-and-scone-fueled brainstorming, Cyn and I have a new plan.

Revised workshop: Participants, still in groups of 2-3, study, prepare and then present a clicker question we created ahead of time.

We’ll create general-enough-knowledge questions that the audience can fully or partially answer, giving us a variety of vote distributions. Maybe we’ll even throw in some crappy questions, like one that’s way too easy, one with an ambiguous stem so it’s unclear what’s being asked, one with all incorrect choices… We’d take advantage of how well we all learn through contrasting cases.

To give the participants feedback on their choreography, we’ll ask part of the audience to not answer the question but to watch the choreography instead. We’re thinking a simple checklist will help the audience remember the episode when the time comes to critique the presentation. And that list will reinforce to everyone what steps they should try to go through when running an effective peer instruction episode.

The participants unanimously agreed they enjoyed the opportunity to sit with their colleagues and create peer instruction questions. Too bad there wasn’t much feedback, though. Which leads to one of the biggest changes in our peer instruction workshop:

2nd peer instruction workshop: Creating questions

We can run another workshop, immediately after the (New) Effective peer instruction or stand-alone, about writing questions. We’re still working out the details of that one. My first question to Cyn was, “Are we qualified to lead that workshop? Shouldn’t we get someone from the Faculty of Education to do it?” We decided we are the ones to run it, though:

- Our workshop will be about creating questions for physics. Or astronomy. Or chemistry. Or whatever science discipline the audience is from. We’ll try to limit it to one, maybe two, so that everyone is familiar enough with the concepts that they can concentrate on the features of the question.

- We’ve heard from faculty that they’ll listen to one of their own. And they’ll listen to a visitor from another university who’s in the same discipline. That is, our physicists will listen to a physicist from the University of Somewhere Else talking about physics education. But our instructors won’t listen to someone from another faculty who parachutes in as an “expert.” I can sort of sympathize. It’s about the credibility of the speaker.

Not all bad news…

Cyn and I are pretty excited about the new workshop(s). Our bosses have already suggested we should run them in December, targeting the instructors who will start teaching in January. And I got some nice, personal feedback from one of the participants who said he could tell how “passionate I am about this stuff.”

And, most importantly, there’s a physics and astronomy teaching assistants training workshop going on down the hall. It’s for TAs by TAs and many of the “by TAs” were at our workshop. Now they’re training their peers. These people are the future of science education. I’m proud to be a part of that.
