Contact: Pritam Dash, pdash@ece.ubc.ca

Protecting Autonomous Robotic Vehicles from Attacks

Autonomous Robotic Vehicles (RVs), such as drones and rovers, have been shown to be vulnerable to attacks that maliciously perturb sensor measurements through physical means. Common physical attacks against RVs include GPS spoofing, in which the attacker transmits false GPS signals, and gyroscope and accelerometer tampering through acoustic noise injection. Physical attacks can have severe consequences, such as crashing the RV or significantly deviating it from its course, resulting in mission failure.

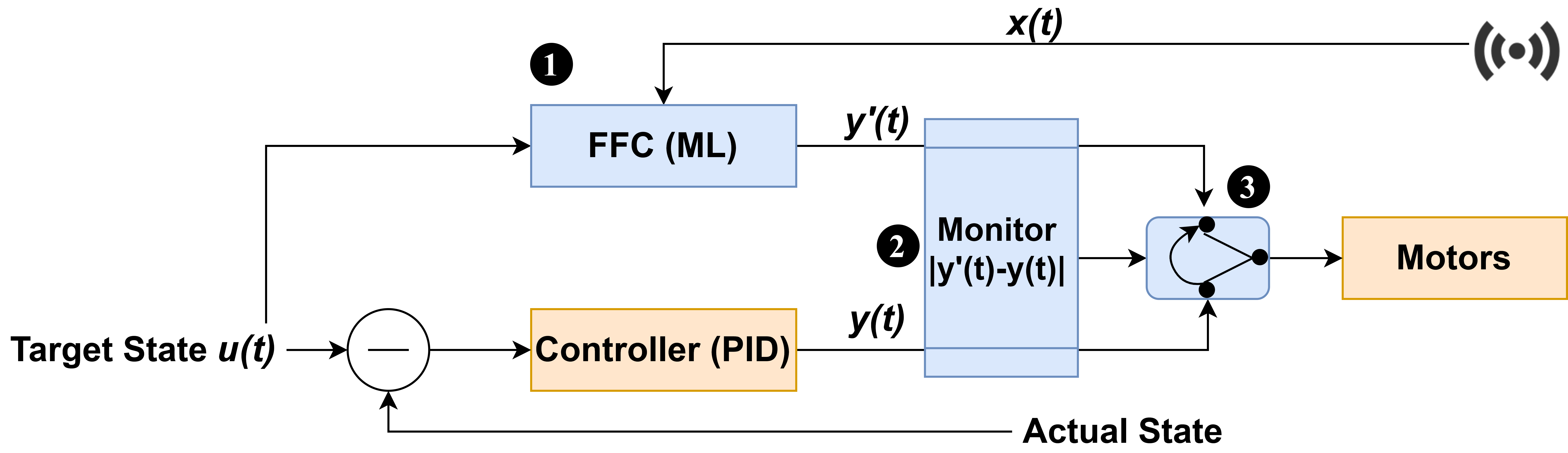

We propose PID-Piper, a framework to recover RVs from physical attacks. PID-Piper uses a Feedforward controller (FFC) in tandem with the RV’s PID controller. The FFC is built using an LSTM model and is trained to prevent attack-induced trajectory errors from influencing the actuator signals, so it predicts robust actuator signals even under attack. When an attack is detected, we switch to the FFC’s predictions, and once the attack subsides, we switch back to the PID controller. By using PID and FFC in tandem, our framework offers the best of both worlds and protects RVs from both faults and attacks. In addition, PID-Piper limits an attacker’s ability to perform stealthy attacks (in which the attacker knows the detection technique) and ensures mission success.
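The switching between PID and FFC described above can be illustrated with a minimal sketch. This is not the PID-Piper implementation: the `PID` class, the `control_step` function, the `threshold` parameter, and the rule of flagging an attack when the two controllers disagree by more than the threshold are all assumptions made for illustration, and the FFC is represented by an arbitrary callable rather than a trained LSTM.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class PID:
    """Textbook PID controller (illustrative, not the RV's actual controller)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, error: float, dt: float) -> float:
        # Accumulate the integral term and estimate the derivative term.
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def control_step(
    pid: PID,
    ffc: Callable[[float, float], float],  # hypothetical stand-in for the LSTM-based FFC
    target: float,
    measured: float,
    dt: float,
    threshold: float,  # assumed detection threshold
) -> Tuple[float, bool]:
    """Return (actuator_signal, attack_flag) for one control cycle."""
    pid_out = pid.step(target - measured, dt)
    ffc_out = ffc(target, measured)
    # Assumed detection rule: a large disagreement between the two
    # controllers is treated as a sign of sensor manipulation.
    under_attack = abs(pid_out - ffc_out) > threshold
    # Use the FFC prediction while the attack is flagged, otherwise the PID output.
    return (ffc_out if under_attack else pid_out), under_attack
```

For example, with `pid = PID(kp=1.0, ki=0.0, kd=0.0)` and a constant stand-in `ffc = lambda target, measured: 0.5`, a spoofed measurement that drives the PID output far from the FFC prediction makes `control_step` return the FFC value with the attack flag set. Once the measurement returns to normal, the PID output is used again.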

Videos showing PID-Piper in action: PID-Piper Videos

Pritam Dash, Guanpeng Li, Zitao Chen, Mehdi Karimibiuki, and Karthik Pattabiraman, PID-Piper: Recovering Robotic Vehicles from Physical Attacks, IEEE/IFIP International Conference on Dependable Systems and Networks (DSN), 2021. (Acceptance Rate: 16.5%). [ PDF | Talk | Code]

Best Paper Award (1 of nearly 300 submissions).

Stealthy Attacks against Autonomous Robotic Vehicles

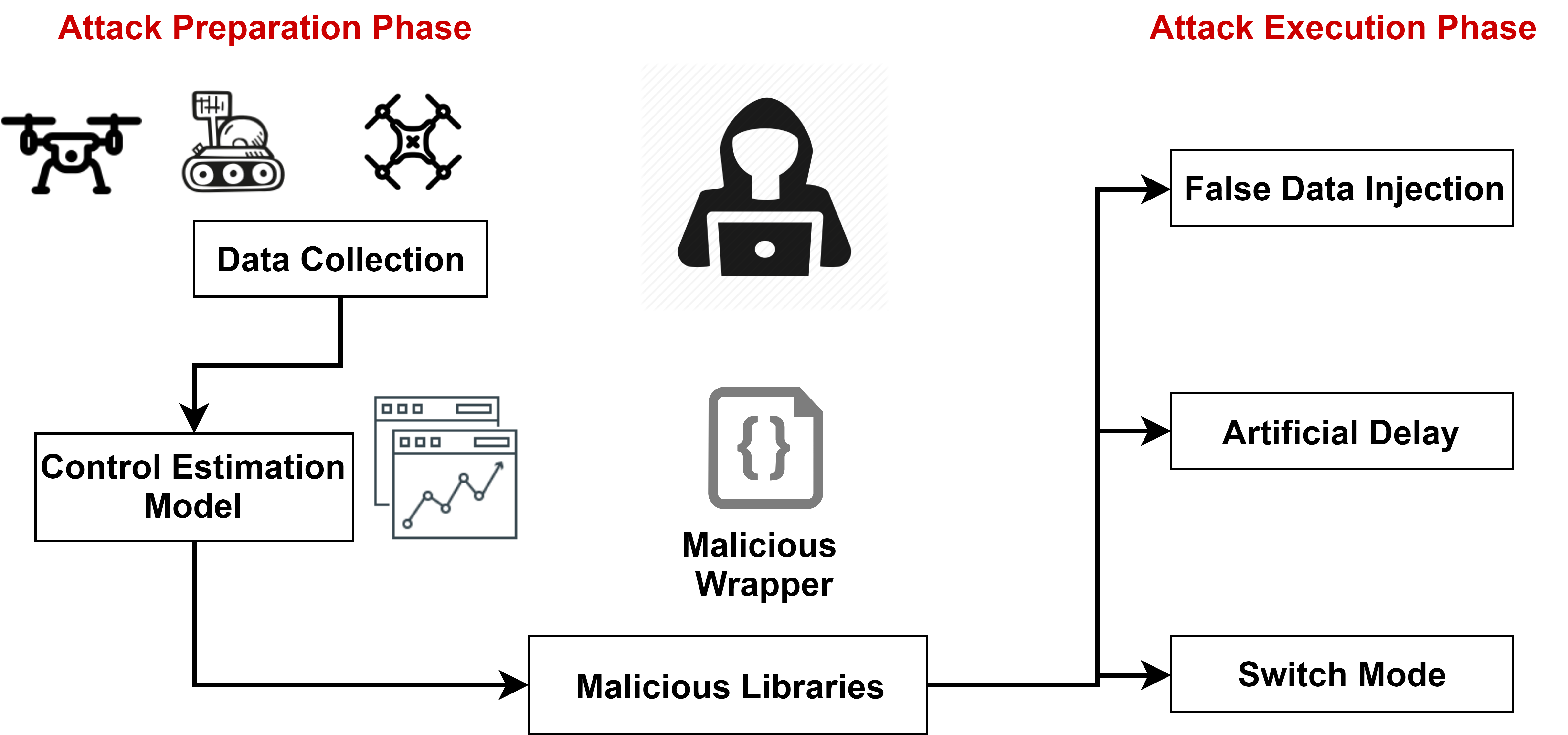

A common way of triggering attacks against RVs is through sensor tampering or spoofing. Because RVs inherently use control algorithms to minimize faults, control-based invariant analysis techniques have been proposed to detect attacks against RVs. We evaluate the efficacy of control-based techniques in detecting stealthy attacks, i.e., attacks in which the attacker knows the detection technique and its parameters.

We propose three kinds of stealthy attacks that evade detection and disrupt RV missions. Our main insight is that, due to model inaccuracies, control-based intrusion detection techniques must use a high detection threshold. An attacker can learn this threshold and consequently perform targeted attacks against the RV that stay below it. We demonstrate the attacks on eight RV systems, including three real vehicles, in the presence of an Intrusion Detection System (IDS) that uses control-based techniques to monitor the RV’s runtime behavior and detect attacks. We find that the control-based techniques are incapable of detecting the stealthy attacks. Our findings show that using inaccurate models for invariant analysis of non-linear cyber-physical systems such as RVs opens new vulnerabilities that can be exploited to perform stealthy attacks.
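The threshold insight can be illustrated with a small sketch. It is not one of the three attacks from the paper: the `detect` and `inject_bias` functions, the residual values, and the threshold are hypothetical, and the detector is assumed to raise an alarm whenever the gap between the model's prediction and the observed behavior (the residual) exceeds a fixed threshold.

```python
from typing import List


def detect(residuals: List[float], threshold: float) -> List[bool]:
    """Flag every time step whose residual magnitude exceeds the threshold."""
    return [abs(r) > threshold for r in residuals]


def inject_bias(residuals: List[float], bias: float) -> List[float]:
    """Model an attacker adding a constant bias to each residual."""
    return [r + bias for r in residuals]


# Hypothetical benign residuals caused by model inaccuracy alone.
benign = [0.1, -0.2, 0.15]
# The threshold is set well above the benign residuals to avoid false alarms.
threshold = 1.0

naive = inject_bias(benign, 2.0)     # attacker ignores the threshold
stealthy = inject_bias(benign, 0.7)  # attacker stays just under the threshold

print(any(detect(naive, threshold)))     # alarms are raised
print(any(detect(stealthy, threshold)))  # no alarm is raised
```

The gap between the benign residuals and the threshold is the room an informed attacker has to perturb the RV without raising an alarm. A less accurate model forces a higher threshold and therefore leaves more room.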

Videos showing the stealthy attacks in real RV systems.

This work was covered in the media: Eureka alert, TechXplore, Globalnews, Market Associates, Helpnet, SERENE-RISC digest

Pritam Dash, Mehdi Karimibiuki, and Karthik Pattabiraman, Out of Control: Stealthy Attacks Against Robotic Vehicles Protected by Control-based Techniques, Annual Computer Security Applications Conference (ACSAC), 2019. (Acceptance Rate: 22.6%) [ PDF | Talk | Code]

Pritam Dash, Mehdi Karimibiuki, and Karthik Pattabiraman, Stealthy Attacks Against Robotic Vehicles Protected by Control-based Intrusion Detection Techniques, ACM Journal on Digital Threats: Research and Practice (DTRAP). Acceptance Date: August 2020. [ PDF ]